Grounding is what happens when an AI’s output is anchored to something real — a document, a data source, a physical perception, or the external world. The problem is that depending on who’s using the word, “something real” means completely different things. This is one of the most overloaded terms in AI, and that overloading has real consequences for how engineers build, how products are sold, and how safety researchers talk past each other.

Let’s break all three meanings down.

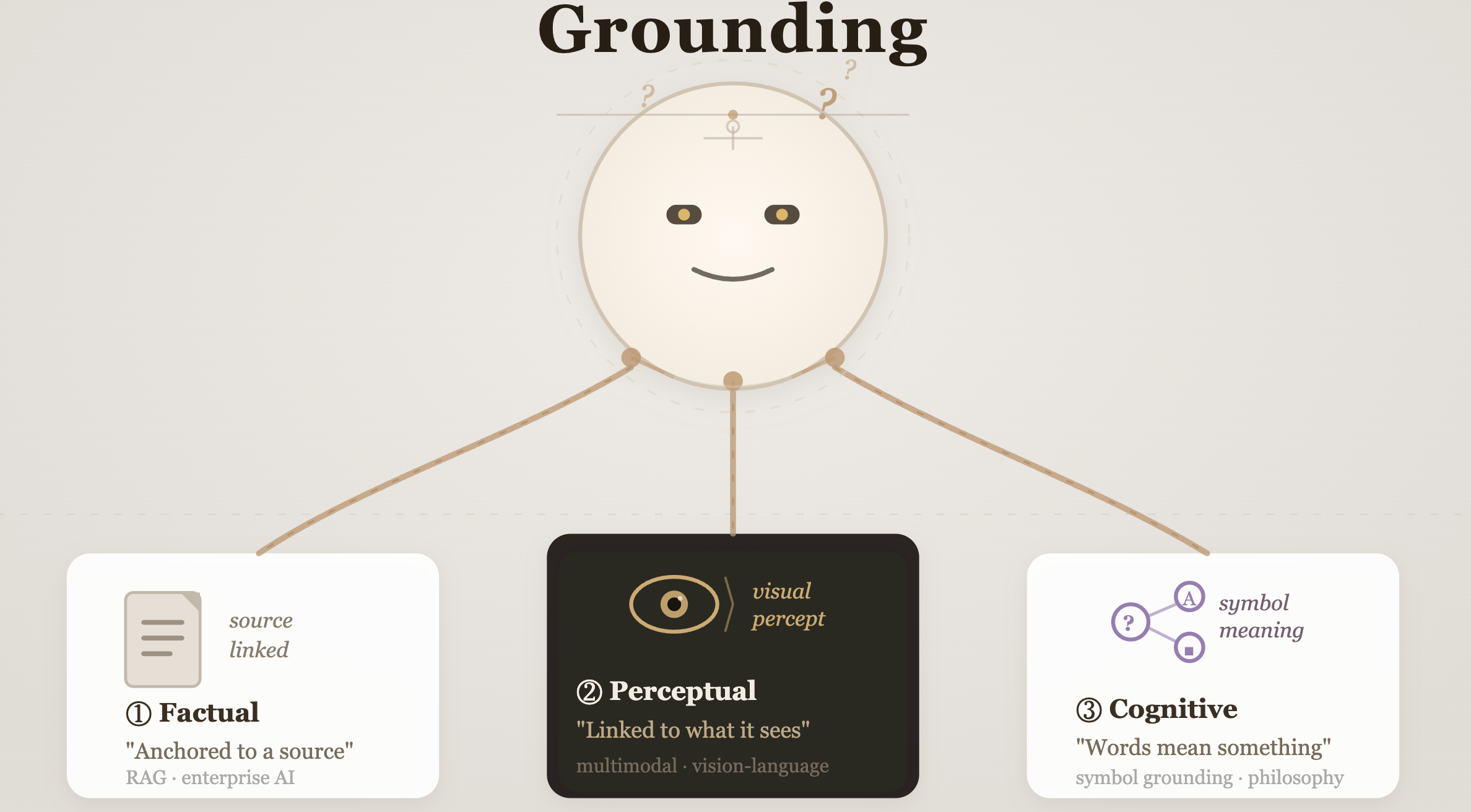

The three definitions of grounding

1. Grounding as factual anchoring (the RAG crowd)

This is the most common usage in 2026, especially in enterprise and product circles. Here, grounding means tying a model’s response to an external, verifiable source — a document, a knowledge base, a database — so it doesn’t confabulate.

RAG is the technical method — the plumbing that connects an LLM to external data. Grounding is the intended outcome: the act of tethering the AI’s response to verifiable facts so it doesn’t hallucinate. While RAG is the journey, grounding is the destination.

This distinction matters. When your vendor says their product is “grounded,” they usually mean the outputs are sourced from documents you provided. But grounding doesn’t guarantee accuracy — it just means the model has a source. Whether it faithfully used that source is a separate question. Frontier models on grounded summarization tasks still hallucinate at rates of 2–5% even when handed the document directly — and performance degrades sharply in long-context or complex reasoning scenarios.

2. Grounding as multimodal perception (the vision-language crowd)

This is the older, more technical definition — and the one researchers mean when they say “visual grounding” or “object grounding.” In this context, grounding refers to the capability of an AI system to connect abstract concepts derived from natural language to specific, concrete representations in the physical world — like parsing the phrase “a person walking a dog” and precisely localizing those entities within an image or video feed.

This is the definition powering models like GPT-4V, Gemini, and Claude’s vision capabilities. When a multimodal model can look at an image and correctly identify which object a referring phrase describes, that’s grounding. It’s less about factual accuracy and more about bridging language and perception.

3. Grounding as meaning (the cognitive science crowd)

This is the oldest definition and the most philosophical — rooted in what’s called the symbol grounding problem, first formalized by cognitive scientist Stevan Harnad in 1990. The question: how does a symbol like the word “chair” actually mean something, rather than just being defined by other symbols?

Grounding is the solution: connecting the symbol “chair” to perceptual data — pixels from a camera, tactile sensor readings from a robot touching a chair — so the symbol becomes meaningful because it is tied to real-world experience.

This is what safety and alignment researchers often mean when they ask whether LLMs are “truly grounded” — i.e., do they understand concepts, or are they just very good at pattern-matching between symbols? The answer, so far, is uncomfortable: while visual-linguistic alignment is learnable at scale, it remains statistically fragile and uneven — most benefiting concrete concepts while failing to support abstract reasoning. No evidence confirms that multimodal training yields understanding categorically superior to unimodal language models.

Why the overloading matters

These three definitions aren’t just academic categories. They produce real confusion in real conversations:

- A product manager says “our model is grounded” and means RAG is running

- An ML engineer says “grounding is still unsolved” and means the symbol grounding problem

- A computer vision researcher says “the model failed at grounding” and means it misidentified the object in the image

All three are correct in their own context. All three are talking past each other.

The most dangerous conflation is the first and third. A critical finding from MIT research: when AI models hallucinate, they tend to use more confident language than when providing factual information — models were 34% more likely to use phrases like “definitely,” “certainly,” and “without doubt” when generating incorrect information. Grounding in the RAG sense reduces this — but it doesn’t solve it. True grounding in the cognitive sense would require the model to actually know when it doesn’t know. We’re not there yet.

So — why should engineers and product leaders care now?

Because “grounded” has become a marketing term. When a vendor tells you their AI is grounded, you need to ask: grounded how? A system with RAG attached is grounded in one sense. A system that reliably knows the limits of its own knowledge is grounded in a much harder, more useful sense. Buying the first and expecting the second is how AI projects fail quietly.

Practical takeaways

- When someone says “grounded,” ask which kind. Is it source-linked (RAG)? Is it perceptually aware (multimodal)? Is it epistemically calibrated (knows what it doesn’t know)? These are different properties with different engineering requirements.

- RAG reduces hallucination — it doesn’t eliminate it. Multiple studies demonstrate that RAG does not eliminate hallucinations — it reduces rates relative to vanilla generation, but the model can still fabricate claims even when given the document. Design your systems accordingly.

- The safest grounding is verifiable grounding. If your AI cites a source, build a pipeline that checks whether the citation actually says what the model claims. That’s the only grounding definition that all three communities would agree on.

Final thought

“Grounding” is one of those words that sounds precise until you need it to actually be precise. The fact that researchers, engineers, and product teams all use it confidently — and mean completely different things — is itself a grounding problem. The word is unmoored from a single meaning. Which is, fittingly, the exact thing grounding is supposed to prevent.